AI is commoditized. Evaluation and integration are not (yet?)

What happens when AI gets cheaper and more capable every quarter, but the bottleneck quietly shifts from model quality to memory and evaluation -- and who actually captures value when that happens?

The general public narrative is still “bigger model = bigger advantage.” That is true to some extent, but the pattern I keep seeing is that capabilities are commoditizing and the real value is moving toward the systems and know-how that make those capabilities reliable and cheap.

The tl;dr:

Memory really is the bottleneck. Context windows are finite, and even huge ones do not give you durable memory or reliability over long tasks.

Engineering responses add overhead. Multi-agent workflows, RAG, and context engineering help, but they raise cost and introduce new failures.

Humans are the ultimate bottleneck. AI’s speed of output is constrained by human evaluation.

Services are likely to win early. Domain-specific services are often run by consultants or engineers who can tune prompts, optimize model cost/perf, and define evaluation metrics -- but those services are highly specific and hard to generalize.

Platform dreams hit non-determinism. The mismatch between “SaaS expectations” and probabilistic outputs pushes early success toward services and infra, not clean horizontal platforms.

Lock-in pressure is real. Frontier providers are competing to pull users into their UIs and model stacks, yet people keep finding ways to route around lock-in.

1) The core constraint: memory is the bottleneck

LLMs have finite working memory. The context window is the model’s short-term buffer: once it fills up, earlier data gets dropped or compressed. Over long tasks, this feels like a colleague who keeps forgetting the first half of your conversation. Bigger windows help, but they do not magically create a long-term memory, and they often increase cost and latency.
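To make the "dropped once it fills up" behavior concrete, here is a minimal sketch of a fixed-budget buffer that evicts the oldest messages first. Everything in it is an assumption for illustration: `ContextWindow` and `count_tokens` are hypothetical names, the word-count tokenizer is a crude stand-in for a real one, and real systems may summarize or compress rather than simply drop.

```python
from collections import deque


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


class ContextWindow:
    """Keeps the most recent messages that fit inside a fixed token budget."""

    def __init__(self, max_tokens: int) -> None:
        self.max_tokens = max_tokens
        self.messages = deque()
        self.used = 0

    def add(self, message: str) -> list:
        """Append a message and return whatever had to be evicted to make room."""
        self.messages.append(message)
        self.used += count_tokens(message)
        evicted = []
        # Drop the oldest messages first until we are back under budget.
        # The newest message is always kept, even if it alone exceeds the budget.
        while self.used > self.max_tokens and len(self.messages) > 1:
            oldest = self.messages.popleft()
            self.used -= count_tokens(oldest)
            evicted.append(oldest)
        return evicted


window = ContextWindow(max_tokens=8)
window.add("the budget for this project is fixed")
dropped = window.add("please draft the plan")
print(dropped)  # the earlier instruction no longer fits and is gone
```

The point of the toy is the failure mode: the constraint stated first is exactly the one that disappears, which is why a bigger buffer postpones the problem without removing it.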

Imagine an AI system as a workshop. The context window is the workbench: only so many tools and notes fit before you have to clear space. A RAG system is the filing cabinet in the corner -- helpful, but it still takes time to fetch the right folder, and whatever it fetches also takes up a large chunk of the workbench. A true long-term memory would be the warehouse across town: vast storage, but only useful if you have a reliable system for labeling, retrieval, and placing things back where they belong.

The industry seems to be racing to stretch those windows -- from tens of thousands of tokens to hundreds of thousands. I will admit, this is impressive engineering. But bigger context does not automatically fix coherence or reliability. You still need to decide what to include, what to compress, and how to keep the model aligned with the task.

So context engineering is becoming more and more important given this constraint. It is the art of curating memory: structuring inputs, pruning noise, and sequencing information to keep the model focused. We are inventing human-managed memory systems because the models do not have real long-term memory on their own. For now, this continues to keep the human in the loop.

2) Engineering responses: distributed workflows, higher costs

The most common response I see is to split work across multiple agents. A multi-agent system is basically a distributed organization: an orchestrator to set direction, sub-agents to handle bounded tasks, and a reviewer to check quality. It is smart, but it is also expensive. You are trading one big model call for many smaller calls plus coordination overhead.
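A rough back-of-the-envelope sketch of that trade-off follows. All numbers are made up for illustration: the token counts per call and the per-million-token prices are assumptions, not measurements from any real provider, and `Call` and `total_cost` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Call:
    input_tokens: int
    output_tokens: int


def total_cost(calls, price_in: float, price_out: float) -> float:
    """Dollar cost of a list of calls, given prices per million tokens."""
    raw = sum(c.input_tokens * price_in + c.output_tokens * price_out for c in calls)
    return raw / 1_000_000


# Hypothetical prices: $3 per million input tokens, $15 per million output tokens.
PRICE_IN, PRICE_OUT = 3.0, 15.0

# One big call that sees the whole task at once.
single = [Call(input_tokens=20_000, output_tokens=2_000)]

# Orchestrator, three sub-agents, and a reviewer. Each one re-reads part of the context.
multi = (
    [Call(5_000, 500)]          # orchestrator
    + [Call(8_000, 1_000)] * 3  # sub-agents
    + [Call(6_000, 500)]        # reviewer
)

single_cost = total_cost(single, PRICE_IN, PRICE_OUT)
multi_cost = total_cost(multi, PRICE_IN, PRICE_OUT)
print(f"single: ${single_cost:.3f}, multi-agent: ${multi_cost:.3f}")
```

Under these assumed numbers the multi-agent run costs a bit less than twice the single call, mostly because shared context is paid for again on every hop. The real ratio depends entirely on how much context each agent has to re-read.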

Then you have retrieval and summarization layers -- retrieval-augmented generation (RAG), vector stores, memory checkpoints. These help with “recall,” but they do not fix “judgment.” They can bring back the right documents, yet the model can still misinterpret or hallucinate. So you end up with more steps, more tokens, and more things to monitor.
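As a toy illustration of "recall, not judgment," here is a sketch of the retrieval step alone. It assumes a naive word-overlap score in place of a real embedding model or vector store, and the documents and function names (`retrieve`, `build_prompt`) are invented for the example.

```python
def tokenize(text: str) -> set:
    # Lowercased bag of words; a real system would use embeddings instead.
    return set(text.lower().split())


def retrieve(query: str, documents: list, k: int = 2) -> list:
    """Return the k documents sharing the most words with the query."""
    query_terms = tokenize(query)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_terms & tokenize(doc)),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, documents: list, k: int = 2) -> str:
    """Stuff the retrieved text into the prompt ahead of the question."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical document store.
DOCS = [
    "refund policy allows returns within 30 days",
    "shipping policy covers international orders",
    "office holiday schedule for december",
]

query = "what is the refund policy"
prompt = build_prompt(query, DOCS)
prompt_tokens = len(prompt.split())
print(prompt)
```

Two things are visible even at this scale. The retrieved text is now part of the prompt, so it spends context budget. And the second-ranked document is only loosely relevant; nothing in the retrieval step decides whether the model should trust it.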

This is the part that makes the economics weird. If you are an operator, you can often make the system work. But that looks like a bunch of careful guardrails, prompt variations, and evaluations that have to be maintained and tuned over time. This requires a high level of technical knowledge, so making AI ‘work’ still is not truly democratized. This leads directly to the service layer.

3) ROI reality: hype vs reproducible gains

Adoption is rising, and spend is rising. But the ROI is uneven. Public demos often hide the real workflow: the prompt libraries, the fallback policies, the human review. The result is that people overestimate just how “plug-and-play” AI is for complex, long-horizon tasks.

The limiting factor is not model intelligence but evaluation. We still do not have widely adopted, reliable evaluation frameworks for non-code tasks. Without those, scaling a workflow across teams becomes a quality-control nightmare.
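For a sense of what even a crude evaluation for a non-code task looks like, here is a minimal sketch. It assumes the simplest possible rubric (phrases that must or must not appear), and `fake_model` is a hypothetical stand-in with canned answers rather than a real model call. Real rubrics are far harder to write, which is the point.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class EvalCase:
    prompt: str
    must_include: list = field(default_factory=list)
    must_not_include: list = field(default_factory=list)


def passes(case: EvalCase, output: str) -> bool:
    """A case passes if every required phrase appears and no forbidden one does."""
    text = output.lower()
    has_required = all(term.lower() in text for term in case.must_include)
    has_forbidden = any(term.lower() in text for term in case.must_not_include)
    return has_required and not has_forbidden


def pass_rate(cases: list, generate: Callable[[str], str]) -> float:
    """Fraction of cases whose generated output passes its rubric."""
    results = [passes(case, generate(case.prompt)) for case in cases]
    return sum(results) / len(results)


def fake_model(prompt: str) -> str:
    # Hypothetical canned answers standing in for a real model.
    canned = {
        "Summarize the refund policy": "Refunds are accepted within 30 days of purchase.",
        "Summarize the warranty": "The warranty lasts two years.",
    }
    return canned.get(prompt, "")


CASES = [
    EvalCase("Summarize the refund policy", must_include=["30 days"], must_not_include=["guarantee"]),
    EvalCase("Summarize the warranty", must_include=["one year"]),
]

rate = pass_rate(CASES, fake_model)
print(f"pass rate: {rate:.0%}")
```

Substring checks like these catch only the most obvious errors. Deciding what belongs in `must_include` for a legal memo or a supply chain forecast is domain judgment, not engineering, and that is where the work concentrates.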

The pessimist in me wonders if the productivity gains are mostly trapped in small pockets of expert use. The optimist in me thinks this is exactly what a new platform wave looks like before it becomes standardized. I am not sure which story wins, but I am watching closely.

4) Industry dynamics: where the money pools

The near-term winners look like infrastructure and domain-specific services. This implies that the LLMs themselves are commoditized: end users will not really care which model is being used. On the infrastructure side, the compute, storage, and orchestration layers capture the value. And since memory is the bottleneck, the companies selling memory and compute get pricing power. It makes sense if you think about the two data points that we have seen over the past few months:

We continue to see news reports that the memory manufacturing capacity of leaders such as SK Hynix is simply not keeping up with exploding demand. In fact, SK Hynix and Samsung Electronics hiked their memory chip prices by 50%.

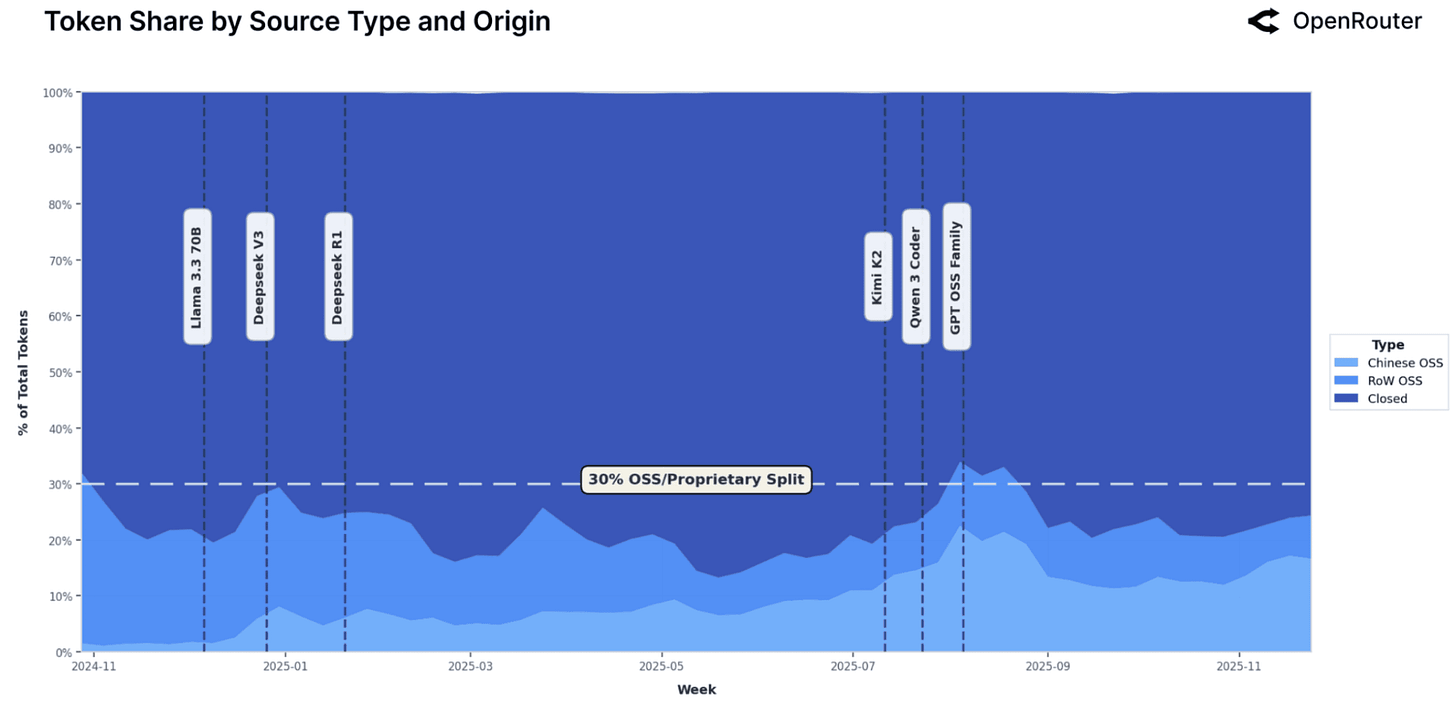

And open source models are seeing higher-than-expected adoption rates, according to OpenRouter. An anecdotal case from the Korean startup ecosystem further backs this up. A B2C consumer app used to run on frontier closed models, but had to switch to open source models because it was running out of runway and the unit economics had not turned positive. The result? The app is now unit-economics positive, and user retention has not changed much. According to the founder, the secret was fine-tuning and process optimization to maximize accuracy. Again, this kind of know-how is not readily available in the market today.

Here is a simple sketch of how I think about the stack and where the service layer sits:

┌──────────────────────────────────────────────┐
│ User Interfaces / Workflows                  │
└──────────────────────────────────────────────┘
                       ▲
                       │
┌──────────────────────────────────────────────┐
│ Domain-Specific Services (operators)         │
│ - prompt tuning                              │
│ - model routing / cost-perf optimization     │
│ - evaluation design & monitoring             │
└──────────────────────────────────────────────┘
                       ▲
                       │
┌──────────────────────────────────────────────┐
│ Middleware / Orchestration                   │
│ (agents, RAG, memory layers)                 │
└──────────────────────────────────────────────┘
                       ▲
                       │
┌──────────────────────────────────────────────┐
│ Frontier Models / Foundation Models          │
└──────────────────────────────────────────────┘
                       ▲
                       │
┌──────────────────────────────────────────────┐
│ Infrastructure                               │
│ (compute, memory, storage, energy)           │
└──────────────────────────────────────────────┘

On the services side, the most effective teams look like expert operators. They do three things well:

Prompt tuning to get a specific outcome.

Model routing and cost/performance optimization across many available models.

Evaluation design -- setting metrics, monitoring drift, and defining what “good” looks like.

This is not just software; it is labor and judgment. These teams usually pull from pre-made models rather than build their own. That makes them fast, but also makes their services highly specific. A workflow built for a legal team does not transfer cleanly to a supply chain team. So the services scale more like “expert-led deployments” than “productized SaaS.”
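The routing part of that operator work can be sketched as "pick the cheapest model that clears your own quality bar." This is a minimal illustration under stated assumptions: the model names, prices, and scores are hypothetical, and `eval_score` is assumed to come from an evaluation suite the operator built for the specific workflow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_1k_tokens: float  # dollars, hypothetical
    eval_score: float          # pass rate on the operator's own eval suite, 0 to 1


def route(options: list, min_score: float) -> ModelOption:
    """Return the cheapest option whose eval score meets the quality bar."""
    eligible = [m for m in options if m.eval_score >= min_score]
    if not eligible:
        raise ValueError(f"no model reaches the required score of {min_score}")
    return min(eligible, key=lambda m: m.cost_per_1k_tokens)


# Hypothetical catalog; none of these are real products or real prices.
CATALOG = [
    ModelOption("frontier-large", cost_per_1k_tokens=0.015, eval_score=0.95),
    ModelOption("open-weights-tuned", cost_per_1k_tokens=0.002, eval_score=0.91),
    ModelOption("open-weights-base", cost_per_1k_tokens=0.001, eval_score=0.78),
]

choice = route(CATALOG, min_score=0.90)
print(choice.name)
```

The code is trivial. The hard, non-transferable part is the `eval_score` column: it only means something for the workflow it was measured on, which is why the same routing table cannot simply be handed from a legal team to a supply chain team.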

A counterpoint is the Palantir playbook: deep domain integration, long-term contracts, and a powerful platform beneath the surface. That can build a great company. But if I squint, it looks more like “Accenture for X” than “Palantir for X” -- high-touch, expensive, defensible, but not a clean horizontal product you can self-serve. Ironically, this also means that the long-term prospects of the kinds of startups claiming to be “Palantir for X” may look like this:

This connects to platform design because the more labor and judgment you embed in the service layer, the more you break the low-touch assumptions that SaaS is built on. Which brings us to the platform question.

5) Platforms vs services: why SaaS assumptions break

The core SaaS assumption is repeatability: stable interfaces, predictable outcomes, low customization. Non-determinism breaks that. The model can give you a great answer at 9 a.m. and a mediocre one at 9:05 a.m. That variability pushes you into human oversight and custom workflows.
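One way to put a number on that variability is to ask the same question many times and measure how often the most common answer comes back. The sketch below assumes a simulated model: `flaky_model` is a hypothetical stand-in that returns "approve" roughly 80% of the time, and `agreement_rate` is an illustrative name, not an established metric.

```python
import random
from collections import Counter
from typing import Callable


def agreement_rate(
    generate: Callable[[str, random.Random], str],
    prompt: str,
    runs: int,
    seed: int = 0,
) -> float:
    """Share of runs that returned the single most common output."""
    rng = random.Random(seed)
    outputs = [generate(prompt, rng) for _ in range(runs)]
    top_count = Counter(outputs).most_common(1)[0][1]
    return top_count / runs


def flaky_model(prompt: str, rng: random.Random) -> str:
    # Simulated non-determinism: usually "approve", sometimes "escalate".
    return "approve" if rng.random() < 0.8 else "escalate"


def steady_model(prompt: str, rng: random.Random) -> str:
    # What classic software looks like: same input, same output.
    return "approve"


question = "Should this invoice be paid?"
flaky = agreement_rate(flaky_model, question, runs=50)
steady = agreement_rate(steady_model, question, runs=50)
print(f"flaky: {flaky:.2f}, steady: {steady:.2f}")
```

A SaaS buyer implicitly expects the `steady_model` line. Anything below that has to be absorbed somewhere, and in practice that means a human reviewer or a custom workflow.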

Middleware and model routing layers help reduce cost and vendor risk, but they do not fix memory or evaluation. They are plumbing, not the foundation. To complicate things further, frontier model providers are competing to lock users into their UI and model stack. We have already seen recent examples where access patterns are tightened, such as the fiasco that ensued after Claude cut off OAuth access for opencode.ai. That is a strong signal that the competition for the user experience layer is real.

But here is where I think the market fights back: users keep finding a way around lock-in. Whether that is through routing layers, open-weight models, or custom interfaces, the pressure to avoid dependency is powerful. I do not think lock-in wins outright, but I do think it will slow down interoperability in the short term.

6) Scenarios: what would flip the value chain

I see three time horizons:

Short term (1-3 years). Infrastructure and vertical services dominate. Multi-agent systems and human oversight remain the norm. This is a services-heavy era.

Medium term (3-6 years). If we get reliable memory architectures and better evaluation tooling, value could shift toward more repeatable platforms. The question is whether those tools become standardized or stay bespoke.

Long term (6+ years). If memory and evaluation reach “software-like” reliability, horizontal platforms could emerge and scale. That would be the moment AI becomes truly commoditized at the workflow level, not just the model level.

Where I am landing

Here is my working mental model: AI commoditizes capabilities, but memory and evaluation commoditize more slowly. The constraint is moving down the stack. We started with model quality as the bottleneck, and now we are hitting memory, orchestration, and human judgment.

I am not saying this is inevitable, but I am watching to see if three things happen:

Durable memory architectures that feel like long-term cognition, not just bigger buffers.

Evaluation frameworks for non-code tasks that are as robust as tests are for software.

Evidence that AI workflows can be repeatable without heavy human supervision, especially in areas where accuracy is key.

If those three shifts occur, the platform story gets real. Until then, I expect the value to keep pooling in infrastructure and services that know how to make messy workflows actually work.

What do you think? What breaks this thesis? What signals should we be watching for that would prove me wrong?
